Does clean code still matter?

Revisiting Robert C. Martin's principles in the age of AI

Intro

Robert C. Martin published Clean Code in 2008. For many of us, this book was one of the best references we had when learning to code. It shaped how an entire generation thought about software quality: naming, functions, testing, and the discipline of writing code that others can read.

Almost two decades later, AI models generate entire applications in minutes. Many developers now spend more time reviewing and prompting than writing from scratch. A reasonable question surfaces: does clean code still matter?

I think it matters even more now. Here's why.

What holds up

The book's central thesis is simple: code is read far more than it is written.

The ratio of time spent reading vs writing is well over 10 to 1. Making it easy to read makes it easier to write.

For many teams in 2026, this is even more true. Developers now read AI-generated code constantly, and review takes a larger share of the work. The reading-to-writing ratio has only increased.

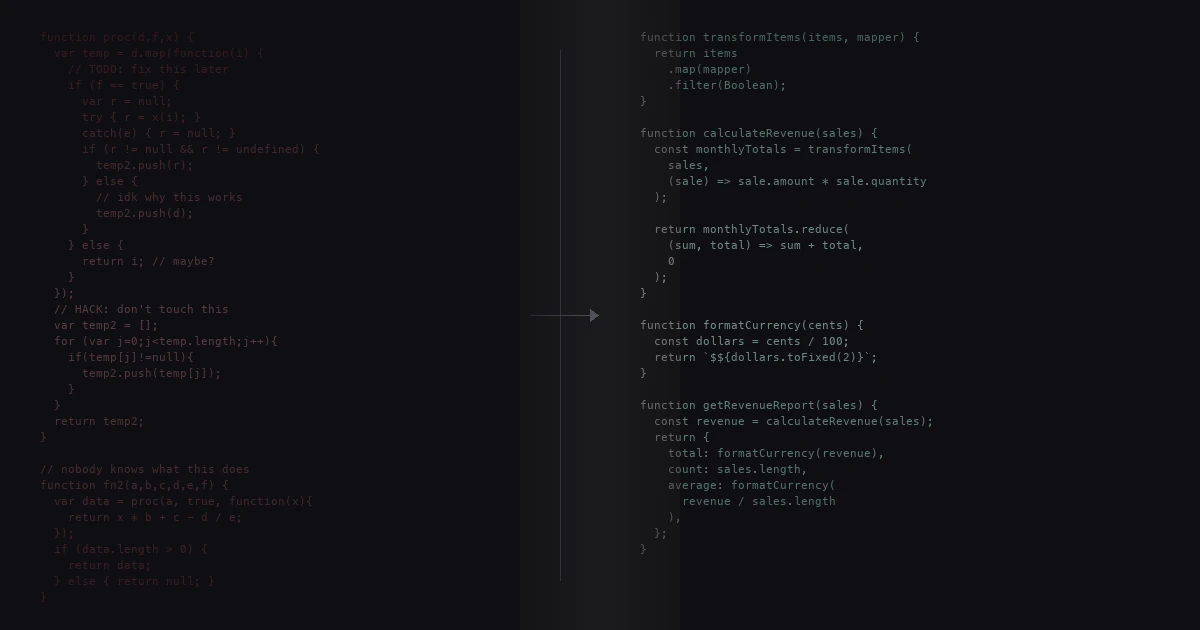

Naming is arguably more important now. When an LLM generates code, meaningful names are the primary way a reviewer understands intent. processData tells you nothing; calculateShippingCost tells you everything. LLMs are happy to produce temp, data, and handler if you let them. The principle still needs enforcing.

You should name a variable using the same care with which you name a first-born child.

LLMs won't apply this care unless you prompt them to, or catch it in review.

Small, focused functions remain practical. When an AI generates a 200-line function that does five things, a developer who has internalized this principle knows to break it apart, or to prompt for smaller functions from the start.

Functions should do one thing. They should do it well. They should do it only.

The Boy Scout Rule becomes essential in AI-heavy codebases. Models don't have a holistic view of your project. They solve the immediate problem without seeing how it fits into the larger architecture, leading to inconsistent patterns and duplicated logic. Entropy increases faster, so "leave the campground cleaner than you found it" is how you counteract the drift.

Comments as failure is particularly relevant when working with AI. Models generate comments prolifically, often restating what the code already says. "Don't use a comment when you can use a function or a variable" applies to AI-generated code just as much as human-written code.

Testing discipline holds up too. LLMs generate tests quickly, but they tend to test the implementation rather than the behavior. They write tests that pass, not tests that are meaningful. The principle that "test code is just as important as production code" keeps AI-generated test suites honest. More importantly, tests are now the feedback loop for AI agents. If you have a solid test suite, you can ship AI-generated changes with confidence because the tests verify the agent didn't break anything. Without tests, you're trusting the model blindly. In an AI-driven workflow, tests become the primary safety net for autonomous changes. They're the contract that AI must satisfy and the most reliable automated signal that generated code still behaves as intended. Clean, well-structured tests aren't just good practice anymore, they're what enable you to let AI agents make changes with far less manual checking.

Why clean code matters even with AI

But these principles were written for human-written code. What about code that's generated by AI?

The strongest challenge: if models can generate and refactor code instantly, why constrain how it's written?

You still might need to read the code. AI handles the head of the distribution: the common, well-understood tasks that make up most of the work. But software has a long tail. Edge cases, subtle bugs, and domain-specific behavior the model has never seen still land on you. The long tail of things only humans can do is wide. When you're deep in that tail, debugging something the model got wrong, the code needs to be readable.

AI works better with clean code. If your codebase is a mess, the AI will pattern-match against the mess and produce more mess. Clean code creates a virtuous cycle.

"First make it work, then make it right" still applies. AI gets you to "it works" faster, but the "make it right" step doesn't disappear.

Getting software to work and making software clean are two very different activities.

Wrapping up

The core message of Clean Code, that professional developers take responsibility for readability and maintainability, is more relevant in 2026 than in 2008.

Without these principles, AI-assisted development quickly degrades into AI-slop: code that compiles and passes tests but that no one can read, maintain, or trust. The more we delegate writing to models, the more we need a clear standard for what good code looks like.

One difference between a smart programmer and a professional programmer is that the professional understands that clarity is king.

The old software fundamentals haven't changed. I don't think they ever will.